From Mid-Band to Multi-Gigabit: How Device Capability Defines 5G Performance

Commissioned by Qualcomm Technologies, Inc.

This short-form analysis compares mmWave (FR2) and sub-6 GHz (FR1) performance in Verizon’s 5G Standalone (SA) network using devices from two leading OEMs. As with our previous study, the devices are powered by chipsets from Qualcomm and Apple, representing recent commercially available modem platforms from each vendor. Testing took place in Times Square, New York City, in August 2025, an environment selected for its consistently high pedestrian density and unique RF conditions.

Devices Under Test (DUTs):

- Apple iPhone 16e – C1 modem, FR1-only, MSRP $599

- Android Device – Snapdragon X75 5G Modem-RF System, FR1+FR2, MSRP $699

Our original intent was to conduct this evaluation inside a large-capacity venue such as a stadium or arena to measure performance under sustained high network load. Venue-based testing was not feasible for this study, but it remains under consideration for future work. Times Square served as a practical proxy, offering high pedestrian density and complex RF conditions. According to pedestrian flow data from timessquarenyc.org, daily foot traffic ranges from 200,000 to 250,000 visitors within a relatively compact geographic footprint, which is more than adequate for stressing RAN capacity and producing representative results.

Key Takeaways

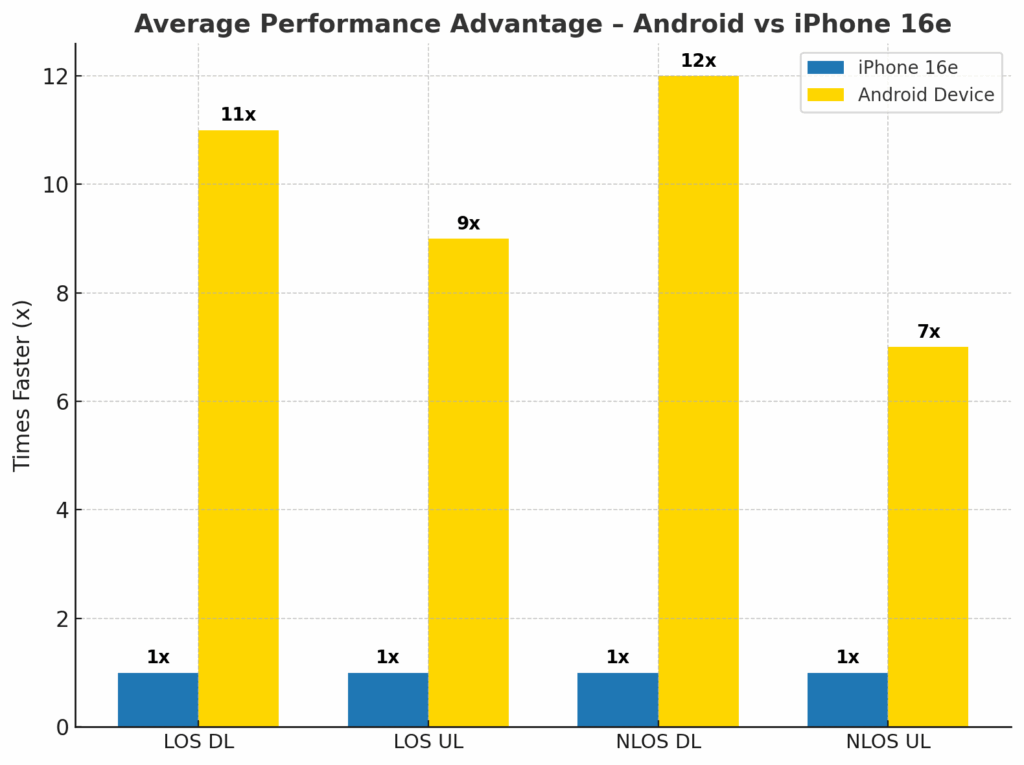

- mmWave Remains a Game-Changer – In Verizon’s dense FR2 deployment, the Snapdragon X75-equipped device achieved over 11X higher DL rates and 7–9X higher UL rates than the iPhone 16e, depending on LOS/NLOS conditions.

- SA 5G Maturity is Evident – All DUTs were scheduled to SA 5G by default, without manual forcing, reflecting significant operational progress by Verizon in Standalone network deployment.

- NR-DC Delivers Massive Gains – Aggregating up to 7CC of mmWave in the downlink with an FR1 anchor enabled the Android device to sustain multi-gigabit downlink rates in LOS and more than 1 Gbps in challenging NLOS scenarios.

- Device Capability Defines Experience – The iPhone’s FR1-only configuration limited it to mid-band performance, even in spectrum-rich environments with abundant mmWave capacity.

- Uplink Matters – The Android device leveraged advanced UL CA and 4CC FR2 uplink to deliver triple-digit Mbps UL rates, a capability gap that remains unaddressed in Apple’s first-generation C1 modem.

Testing was conducted in stationary mode, targeting both line-of-sight (LOS) and non-line-of-sight (NLOS) conditions. Verizon’s coverage in Times Square was exceptional across LTE, sub-6 GHz (FR1), and mmWave (FR2). The deployment appeared mature, with 5G Standalone available nearly everywhere we tested in NYC, which makes it easier to realize peak data speeds with FR1 and 7CC FR2. Both SA and NSA were available, but NSA fallback was rare and typically observed only in deep-indoor, edge-of-cell locations.

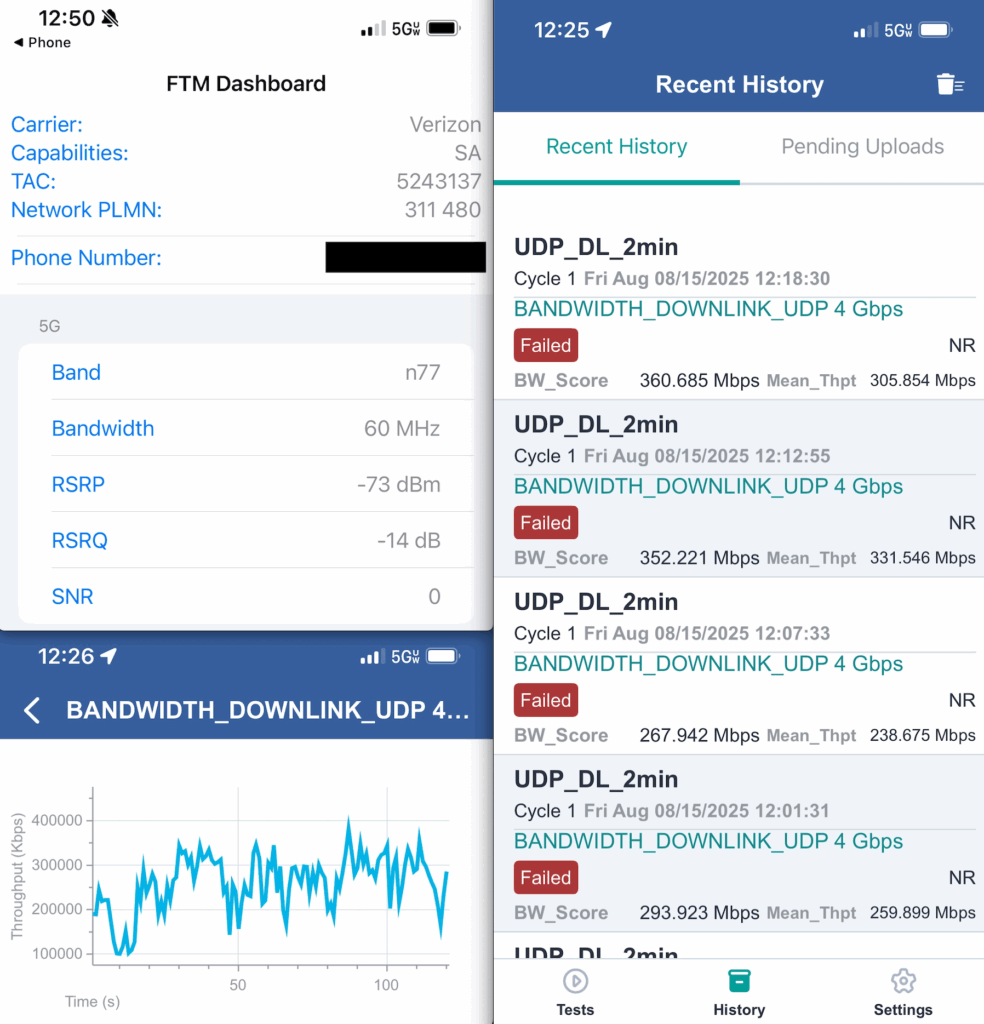

Due to iOS platform restrictions, chipset-level KPIs were unavailable for the iPhone 16e. As a result, our Apple analysis is limited to application-layer throughput measurements and the iPhone’s built-in Field Test app. In contrast, the Android device allowed full modem-level visibility via Qtrun Technologies’ AirScreen platform, with traffic generation and analysis performed using Spirent’s Umetrix Data Server.

Even with these measurement asymmetries, the observed performance delta between the Qualcomm-powered device and Apple’s C1 modem was both significant and consistent across LOS and NLOS test conditions.

A special thanks to Qtrun Technologies for providing AirScreen software for chipset-level analysis and Qualcomm for sponsoring this testing effort and providing access to the Umetrix Data Server (Spirent Communications).

Verizon’s commercial 5G service debuted in 2019 with an mmWave-only deployment in the 28 GHz band (n261). The first two smartphones to support this new air interface, the Motorola Moto Z3 (via the 5G Moto Mod) and the Samsung Galaxy S10 5G, were both powered by Qualcomm’s first-generation X50 5G modem. At launch, device capability was limited to NSA operation, 4CC carrier aggregation in the downlink, and uplink over LTE with, at best, a single mmWave carrier.

Fast forward six years, and mmWave is now supported by the vast majority of Verizon’s smartphone portfolio. Today’s flagship devices, such as those built on the Qualcomm Snapdragon X80 Modem-RF System, support up to 10CC DL and 4CC UL in FR2. This leap in device capability has materially changed the user experience and RAN capacity utilization — benefits that we were able to quantify in this study. It’s worth noting that no input or logistical support was provided by the operator for this testing.

By default, both devices under test (DUTs) were scheduled to Verizon’s Standalone (SA) 5G core, without any manual network selection. This behavior was consistent across most NYC market locations in our broader two-month campaign, with fallback to NSA or LTE occurring only in deep indoor, edge-of-cell conditions.

Verizon’s SA 5G network in NYC is anchored by two TDD C-band layers in n77 — one 100 MHz wide, the other 60 MHz wide. In recent months, we also observed the addition of a 10 MHz FDD low-band channel in n5, improving indoor SA coverage and bolstering uplink reach. Complementing the FR1 layers, Verizon operates 7CC of FR2 in n261, accessible to the Android device via NR-DC (New Radio Dual Connectivity). In this configuration, FR1 serves as the anchor, while FR2 carriers add significant downlink and uplink capacity.

Throughout our testing, TDD FR1 was in use 100% of the time, but the difference in FR1 channel widths (100 MHz vs. 60 MHz), which we could not control, contributed to uplink variability on the iPhone 16e. Without FR2 support, the iPhone was inherently constrained in scenarios where the Android device was able to aggregate an FR1 channel with 4CC UL in FR2, a limitation that consistently manifested in our throughput results.

Test Methodology

Given the compact physical footprint and exceptionally high user density in Times Square, combined with the limited propagation range of mmWave, we structured testing around controlled Line-of-Sight (LOS) and Non-Line-of-Sight (NLOS) scenarios. Each device under test (DUT) was subjected to five runs per scenario. To minimize the influence of live network variability, we employed an interleaved test design in which devices were alternated under identical RF conditions. This ensured that transient factors such as scheduler behavior, user traffic surges, and interference dynamics were evenly distributed across both DUTs. The methodology was applied consistently in both LOS and NLOS scenarios, enabling a more reliable comparison of device performance by minimizing temporal bias inherent to live network testing.
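The interleaved design described above can be sketched as a simple run schedule. This is a minimal illustration only: the function name, the device labels, the choice of which device goes first, and the treatment of downlink and uplink as separate passes are assumptions for the example, not details taken from the test plan.

```python
from itertools import product


def interleaved_schedule(devices, scenarios, directions, runs=5):
    """Build a run order in which the devices alternate on every test.

    Each (scenario, direction) pair gets `runs` rounds, and within a round
    every device is tested back to back so both see similar RF conditions.
    """
    schedule = []
    for scenario, direction in product(scenarios, directions):
        for run in range(1, runs + 1):
            for device in devices:
                schedule.append((scenario, direction, run, device))
    return schedule


# Hypothetical labels; five runs per scenario as stated in the methodology.
plan = interleaved_schedule(
    devices=["iPhone 16e", "Android device"],
    scenarios=["LOS", "NLOS"],
    directions=["DL", "UL"],
    runs=5,
)

for scenario, direction, run, device in plan[:4]:
    print(f"{scenario} {direction} run {run}: {device}")
```

Because the devices alternate on every test rather than running in two separate batches, a traffic surge or scheduler change that lasts a few minutes affects both devices rather than only one.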

All testing was performed using the Umetrix Data platform, generating sustained two-minute UDP and HTTP traffic sessions at target loads of 4,000 Mbps downlink and 600 Mbps uplink. This approach ensured that results reflected application-layer throughput under conditions designed to saturate available network resources.
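To give a sense of scale for these target loads, the short sketch below computes the upper bound on data moved in a single session, assuming the target rate were delivered for the full two minutes. The function name is illustrative, and decimal units (1 GB = 1,000 MB) are an assumption of the example.

```python
def session_volume_gb(target_mbps: float, duration_s: float) -> float:
    """Upper bound on data moved in one session, in decimal gigabytes."""
    megabits = target_mbps * duration_s
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


SESSION_SECONDS = 120  # two-minute sessions, per the methodology

dl_gb = session_volume_gb(4000, SESSION_SECONDS)
ul_gb = session_volume_gb(600, SESSION_SECONDS)

print(f"Downlink ceiling per session: {dl_gb:.0f} GB")
print(f"Uplink ceiling per session: {ul_gb:.0f} GB")
```

Any session that moves less than these ceilings was limited by the network or the device rather than by the traffic generator, which is the point of setting the target loads this high.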

The RF environment was characterized by a layered architecture: macro sites providing LTE and FR1 coverage formed the coverage anchor, while a dense grid of mmWave small cells mounted on street-level infrastructure such as light poles delivered the FR2 capacity layer. This deployment model provided an ideal backdrop to evaluate performance differences between LOS and NLOS, as well as the relative strengths and limitations of each DUT in leveraging available spectrum.

Testing Results

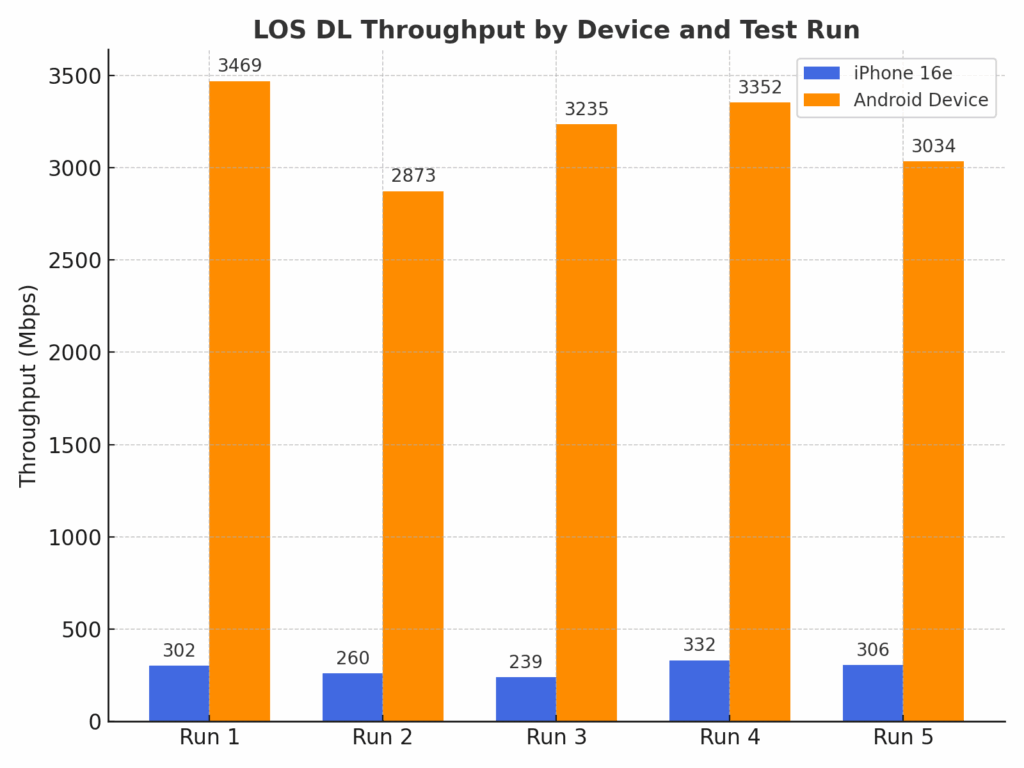

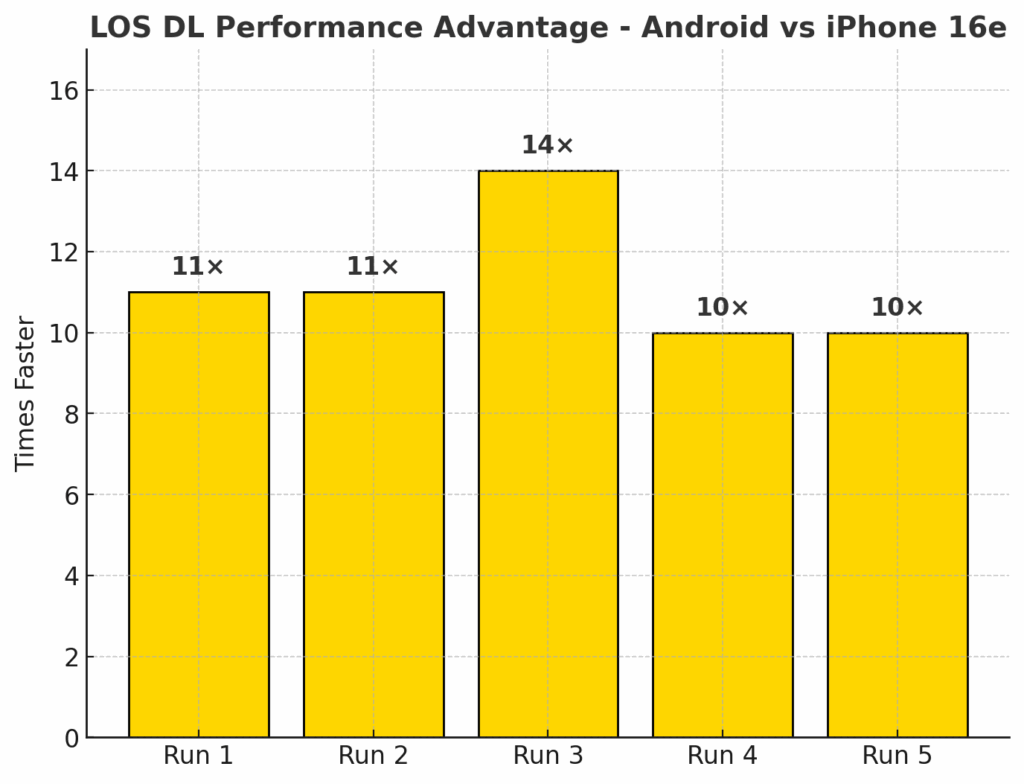

With the interleaving framework in place, we moved into throughput characterization. Performance was evaluated across five independent runs per scenario, capturing both downlink and uplink under LOS and NLOS conditions. The results that follow summarize all runs in each scenario, which helps separate device capability from transient network variability. As such, the figures can be read as a reasonable indicator of real-world performance in dense, capacity-constrained environments.

In LOS download throughput testing, the iPhone 16e averaged 288 Mbps, while the Android device averaged 3,193 Mbps, roughly 11X the iPhone’s throughput. The results clearly illustrate the substantial advantage of the Snapdragon-powered Android device in leveraging available network resources for peak performance in LOS scenarios. The Android device was able to leverage a single channel of FR1 (100 MHz or 60 MHz) and 7CC of mmWave, while the iPhone had access to two FR1 channels.

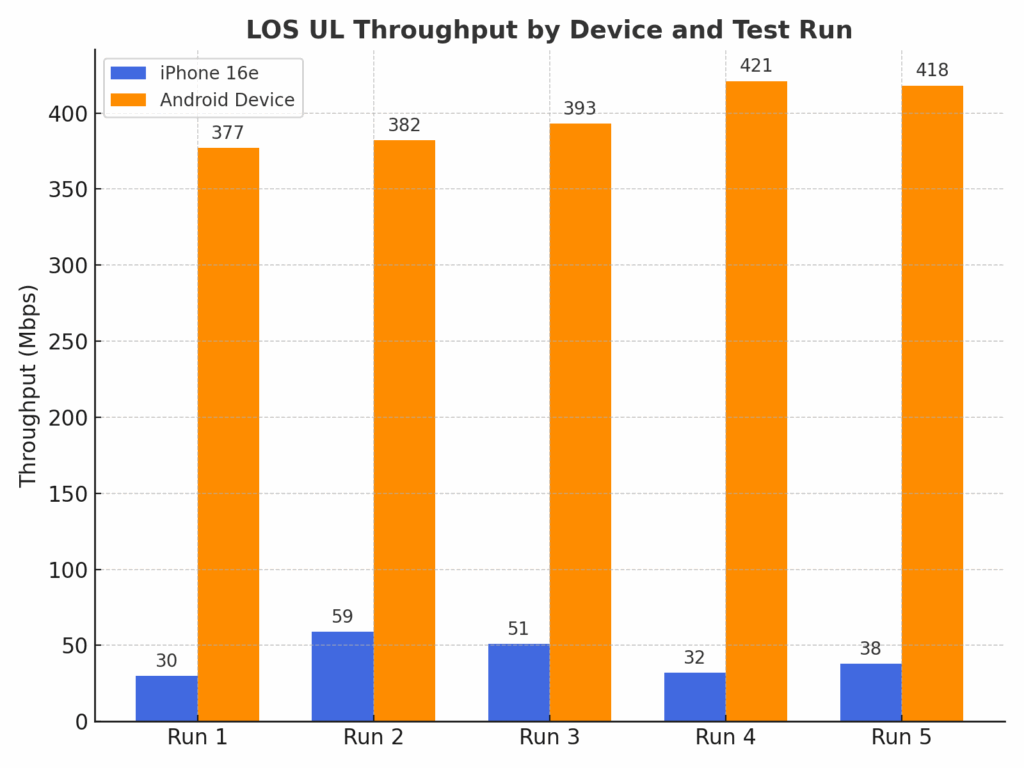

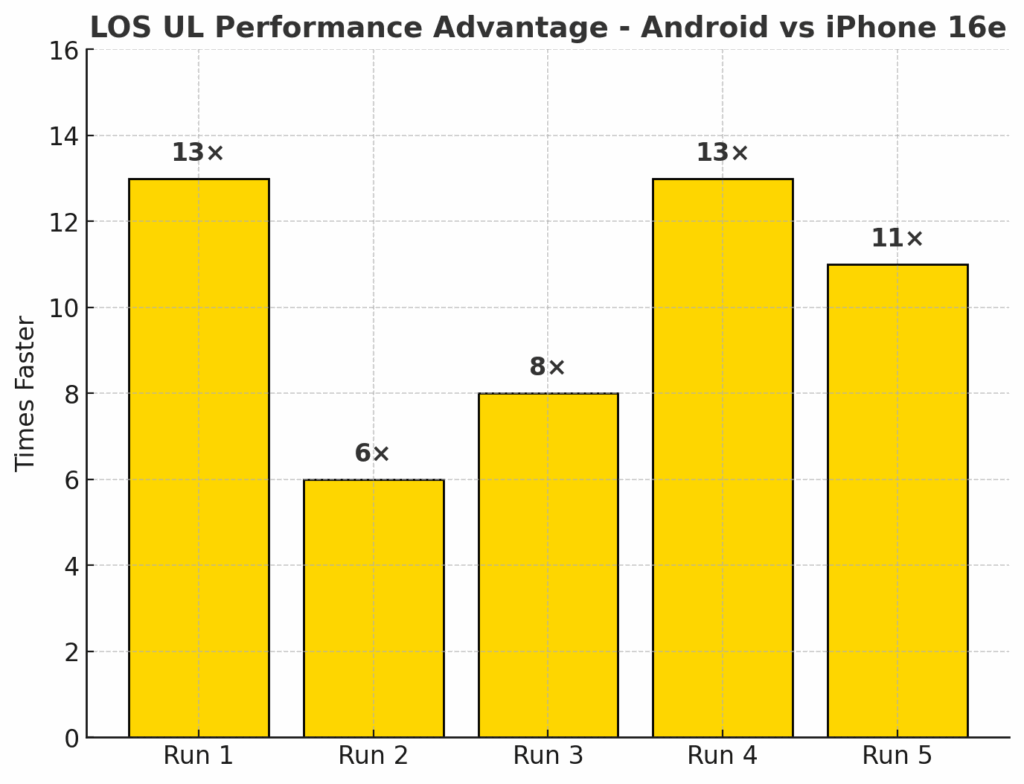

In LOS upload testing, the iPhone 16e averaged 42 Mbps, compared to 398 Mbps for the Android device, a more than 9X advantage. These results highlight the substantial uplink performance gap between Apple’s first-generation C1 modem and Qualcomm’s Snapdragon X75 platform. The Android device consistently leveraged advanced uplink carrier aggregation and higher spectral efficiency to deliver dramatically higher throughput, underscoring its ability to fully utilize Verizon’s 5G SA uplink capabilities in ideal LOS conditions. Here the Android device was able to attach to a single FR1 channel and four FR2 channels, while the iPhone could only use a single FR1 channel.

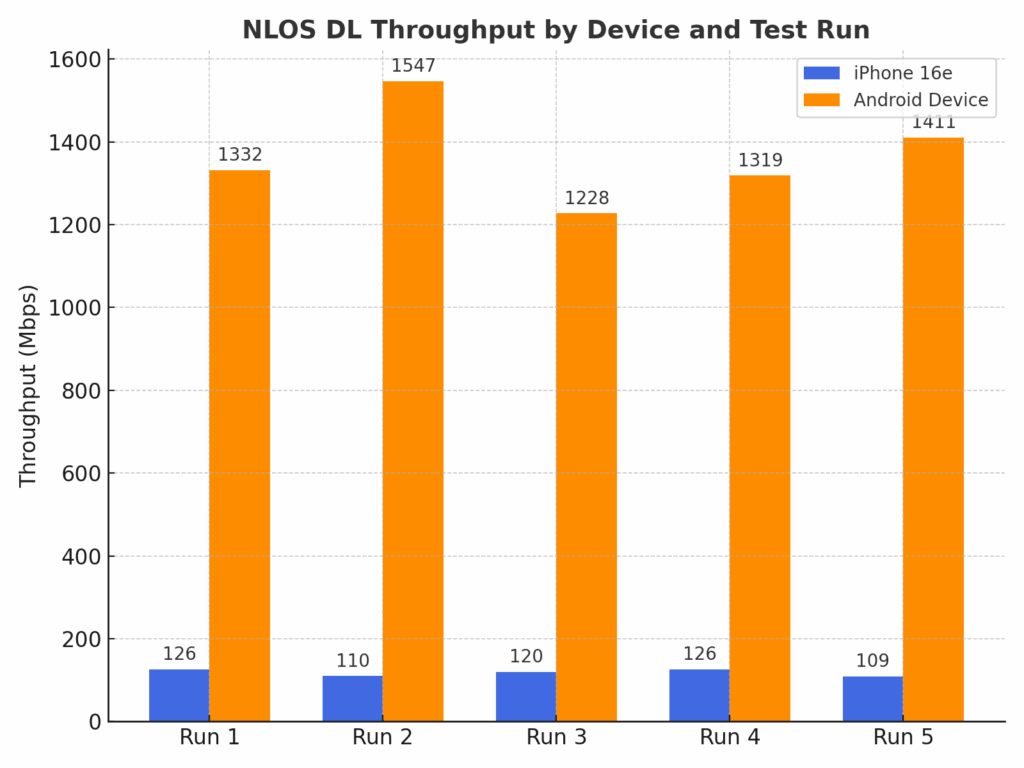

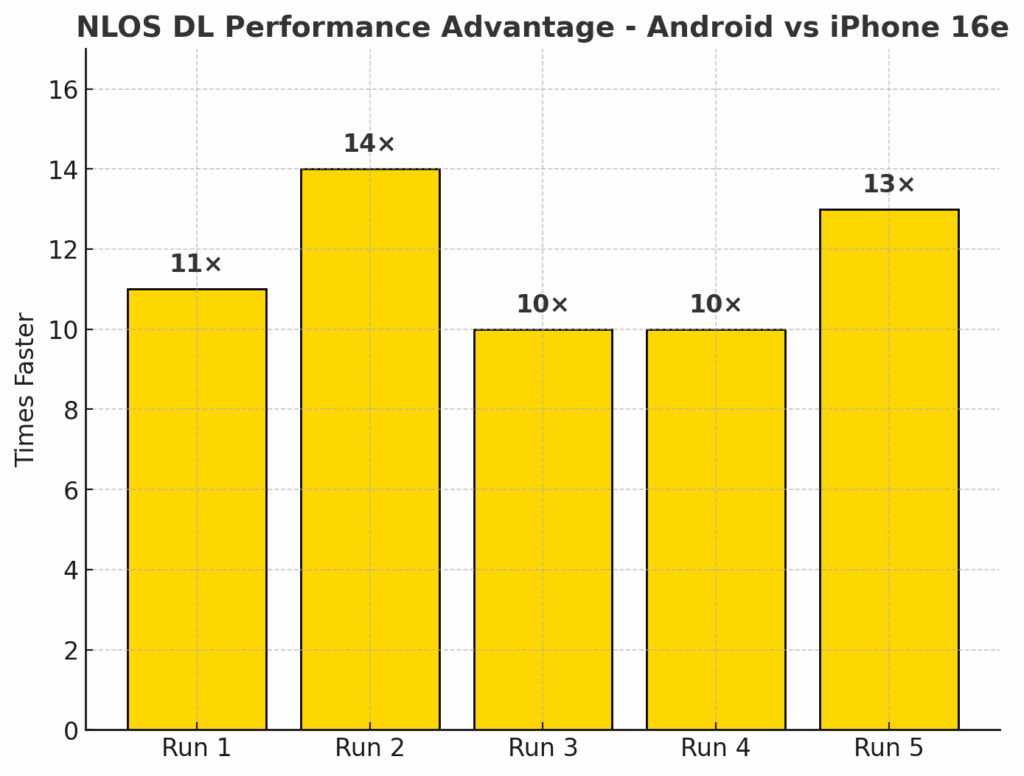

In NLOS download testing, the iPhone 16e averaged 118 Mbps, while the Android device reached 1,367 Mbps, nearly 12X the iPhone 16e’s throughput in these less-than-optimal RF conditions. The performance gap was consistent across all five runs, underscoring the Snapdragon X75 Modem-RF System’s ability to sustain high throughput even without direct line-of-sight to the mmWave node. By contrast, the iPhone, lacking FR2 support, could not use any of the available mmWave capacity in these scenarios, highlighting a substantial real-world performance disadvantage in dense urban environments where NLOS links are common.

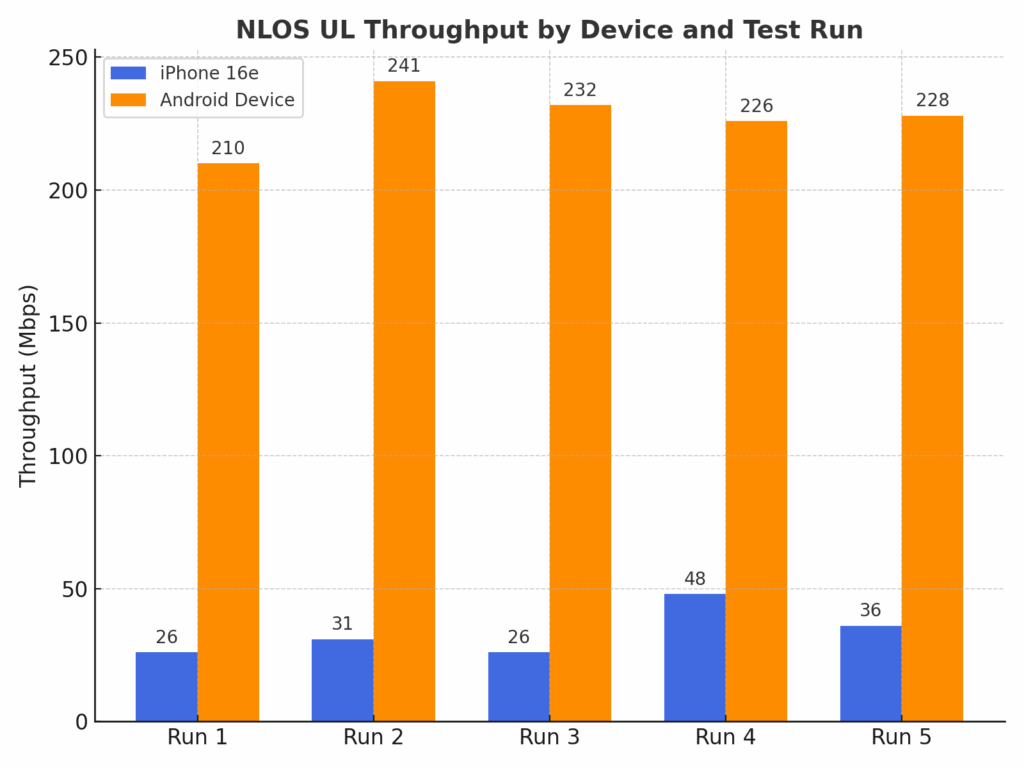

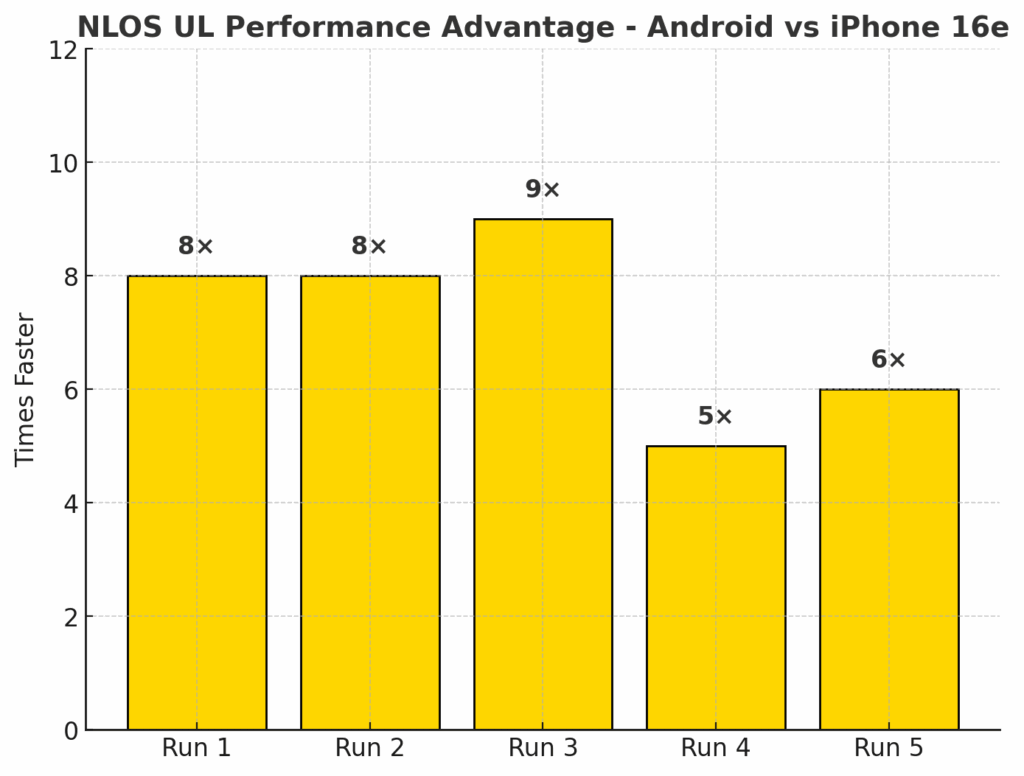

In NLOS upload throughput testing, the iPhone 16e averaged 33 Mbps, while the Android device reached 227 Mbps. This makes the Android device roughly 7X faster than the iPhone 16e, again underscoring the significant uplink performance advantage of the Qualcomm-powered device under challenging RF conditions.
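The multiples quoted in this section are rounded. As a cross-check, the sketch below recomputes them from the four pairs of reported averages and also shows how much of each device's LOS throughput was retained in NLOS. The dictionary layout, key names, and function names are illustrative choices for the example; only the Mbps values come from the results above.

```python
# Reported average throughput in Mbps, keyed by (scenario, direction).
AVERAGES_MBPS = {
    ("LOS", "DL"): {"iphone_16e": 288, "android": 3193},
    ("LOS", "UL"): {"iphone_16e": 42, "android": 398},
    ("NLOS", "DL"): {"iphone_16e": 118, "android": 1367},
    ("NLOS", "UL"): {"iphone_16e": 33, "android": 227},
}


def throughput_ratio(scenario: str, direction: str) -> float:
    """Android average divided by iPhone average for one test case."""
    row = AVERAGES_MBPS[(scenario, direction)]
    return row["android"] / row["iphone_16e"]


def nlos_retention(device: str, direction: str) -> float:
    """Fraction of a device's LOS average that it kept in NLOS."""
    los = AVERAGES_MBPS[("LOS", direction)][device]
    nlos = AVERAGES_MBPS[("NLOS", direction)][device]
    return nlos / los


for scenario, direction in AVERAGES_MBPS:
    ratio = throughput_ratio(scenario, direction)
    print(f"{scenario} {direction}: Android / iPhone = {ratio:.2f}x")

for device in ("iphone_16e", "android"):
    for direction in ("DL", "UL"):
        kept = nlos_retention(device, direction)
        print(f"{device} {direction}: NLOS keeps {kept:.0%} of LOS")
```

Computed this way, the downlink multiples are about 11.1X (LOS) and 11.6X (NLOS), and the uplink multiples are about 9.5X (LOS) and 6.9X (NLOS).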

Conclusion

The results from this comparative evaluation clearly demonstrate the tangible benefits of mmWave when deployed in a mature network environment and leveraged by devices with the modem-RF capability to fully exploit it. Verizon’s extensive FR2 footprint in Times Square, combined with a Standalone 5G core and wideband FR1 resources, created an ideal testbed for quantifying the performance delta between the FR1-only iPhone 16e and an Android device capable of accessing all available network resources.

In both LOS and NLOS scenarios, the Snapdragon-powered Android device delivered order-of-magnitude throughput advantages over the iPhone 16e in the downlink (11X to 12X), along with 7X to 9X advantages in the uplink. These gains were driven by the ability to aggregate substantial mmWave capacity (up to DL 7CC and UL 4CC in FR2) with FR1 anchors. In contrast, the iPhone’s FR1-only configuration limited peak rates to what could be achieved over C-Band spectrum alone, even in spectrum-rich environments where mmWave was readily available.

The LOS results highlighted the raw capacity advantage of FR2, with the Android device achieving sustained multi-gigabit rates in the downlink and triple-digit Mbps rates in the uplink, while the iPhone plateaued at sub-50 Mbps uplink and sub-300 Mbps downlink despite LOS RF conditions. NLOS testing reinforced these findings: while mmWave performance naturally declined without direct visibility to the serving node, the X75 still averaged more than 1.3 Gbps in the downlink and triple-digit Mbps rates in the uplink by capitalizing on beamforming, advanced link adaptation, and robust dual connectivity. The iPhone, by comparison, delivered performance in line with mid-band-only deployments, illustrating the opportunity cost of excluding FR2 support.

From a network perspective, Verizon’s scheduling of all DUTs to Standalone 5G by default, without manual intervention, underscores the operator’s progress in SA maturity and its ability to seamlessly integrate mmWave into the broader RAN architecture. The addition of the 10 MHz FDD n5 carrier to complement the dual n77 TDD layers further strengthens indoor coverage and uplink reliability, particularly for devices without FR2 access.

For end users, the practical takeaway is clear: in markets with dense mmWave deployments, device capability remains the single most important determinant of the 5G experience. For the industry, this study is a reminder that while FR2 adoption has been gradual, its benefits—particularly in capacity-limited, high-traffic environments—are substantial and measurable. As SA 5G deployments mature and FR2 integration becomes more seamless, we expect the performance gap between mmWave-capable and FR2-limited devices to remain significant, if not widen further.